Quantitative Research at Jane Street

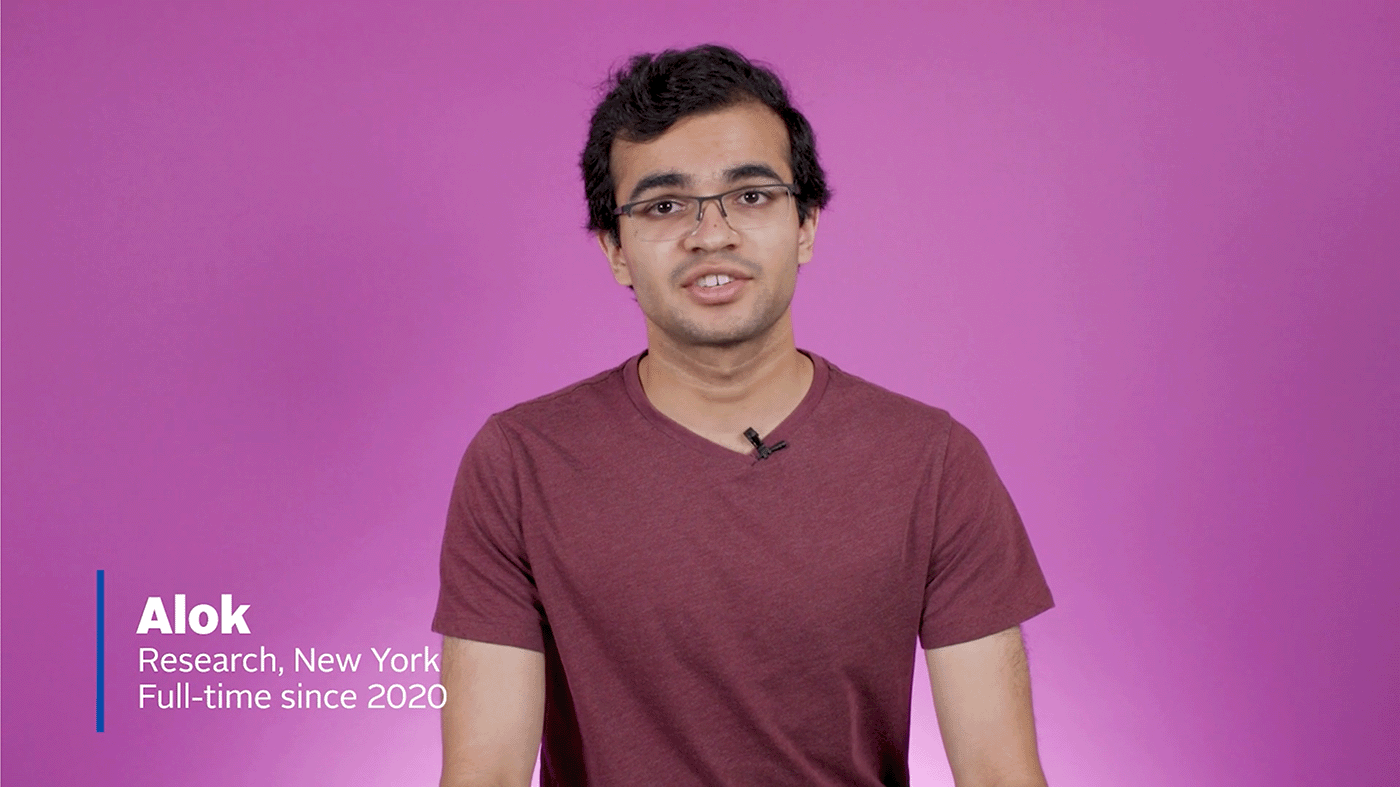

Researchers at Jane Street are responsible for analyzing large datasets using a variety of machine learning techniques, building and testing models, creating new trading strategies and writing the code that implements them.

Join the team that makes it happen.

Work functionally

Be able to apply logical and mathematical thinking to all kinds of problems. Asking great questions is more important than knowing all the answers.

Write great code. We mostly write in OCaml, so you should want to learn functional programming if you don’t already have experience with it.

Have good taste in research. The problems we work on rarely have clean, definitive answers. You should be comfortable pushing in new and unknown directions while maintaining clarity of purpose.

Think and communicate precisely and openly. We believe great solutions come from the interaction between diverse groups of people across the firm.

Before the interviews

When you submit your application to Jane Street for any role, it’s reviewed by an actual human. We respond to every application we receive, ideally within a week or so. Depending on your background we might also suggest you consider another position. We’ll always be up front with you about what roles you’re being considered for (or aren’t), so please also let us know what you’re interested in throughout the process.

If you’ve applied or interviewed before, don’t let that stop you from trying again! We reconsider people all the time: people add to their experiences, and our needs often change as Jane Street grows. If it didn’t work out a couple of years ago, you might have a better chance this go-around.

Research Internships

Our internship program is a great way to get to know us and it also lets us get to know how you think, work alongside us and solve problems. Interns are tasked with working on projects to help us refine our computational methods and improve our ability to analyze market data. These are true research projects in the sense that we ask our interns to investigate difficult questions to which we do not know the answers.

Here are some questions past interns have considered:

When numerically integrating many different (but related) functions, is there some transformation of the problem that allows some expensive part of the computation to be shared?

Common robust regression techniques, implemented naively, require “remembering” all of the data. Our data sets are often too big for this. How can we accomplish the same thing with a low memory footprint?

Sometimes the markets behave strangely in a way that is obvious to humans. Can we get a computer to recognize these situations?

Many beloved statistical methods assume the world is static, and that observations are independent, but these assumptions don’t hold in practice. What can we do about it?

An aspect of optimizing the computational performance of our trading systems is NP-complete. How do we get a near-optimal solution in spite of this?

How should we measure the market impact of our own trading?

How can we best combine historical returns and options market data to infer joint distributions of asset prices?

How can we efficiently filter Twitter feeds down to market-relevant content?
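
To make one of these questions concrete, here is a minimal sketch of the low-memory robust regression idea. It is only an illustration, not a description of how Jane Street or any past intern approached the problem. It assumes a single predictor, uses the Huber loss as the robust criterion, and fits the line with one pass of stochastic gradient descent so that only a handful of numbers are kept in memory. The class name, parameter defaults, and synthetic data are all hypothetical, and Python is used purely for brevity.

```python
import random


class StreamingHuberRegression:
    """One-pass, constant-memory fit of y ~ slope * x + intercept.

    Hypothetical illustration: each observation is used once and then
    discarded, so memory use does not grow with the size of the data.
    """

    def __init__(self, delta=1.0, lr=0.5):
        self.slope = 0.0
        self.intercept = 0.0
        self.delta = delta  # residuals larger than this are treated as outliers
        self.lr = lr        # base step size (an assumed default)
        self.n = 0          # number of observations seen so far

    def update(self, x, y):
        self.n += 1
        step = self.lr / self.n ** 0.5  # decaying step size
        residual = self.slope * x + self.intercept - y
        # Gradient of the Huber loss with respect to the residual: the
        # residual itself, clipped to [-delta, delta]. Clipping bounds the
        # influence any single outlier can have on the fit.
        g = max(-self.delta, min(self.delta, residual))
        self.slope -= step * g * x
        self.intercept -= step * g


def synthetic_stream(n, seed=0):
    """Yield points near y = 2x + 1, with about 5% gross outliers (made-up data)."""
    rng = random.Random(seed)
    for _ in range(n):
        x = rng.uniform(-1.0, 1.0)
        y = 2.0 * x + 1.0 + rng.gauss(0.0, 0.1)
        if rng.random() < 0.05:
            y += 50.0  # gross outlier
        yield x, y


model = StreamingHuberRegression()
for x, y in synthetic_stream(20000):
    model.update(x, y)
print(model.slope, model.intercept)
```

Because the clipped gradient caps each outlier's pull, the fitted line should stay close to the bulk of the data, whereas an ordinary least-squares intercept would be dragged well upward by the contaminated points. The trade-off is that a single stochastic pass is only approximate, and the step size schedule has to be tuned.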